Intro

Axiom is an exciting new protocol that uses ZK technology to let smart contracts trustlessly compute over the history of Ethereum. I believe it’s a novel primitive for others to build with. The docs provide a lot of information about the protocol itself and include a helpful tutorial for building an Autonomous Airdrop. An SDK improves the integration experience for developers and includes a CLI, a React client, and TypeScript and smart contract libraries.

One of the smart contract libraries extends the standard Foundry test library, with an interesting setup and custom cheat codes. I wanted to investigate it further, using the test from the Autonomous Airdrop example as a reference and looking at AxiomTest in more detail.

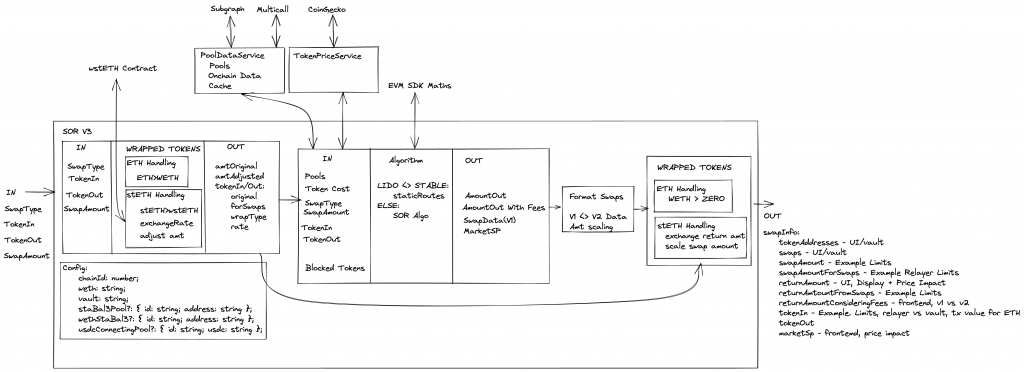

System Overview

To appreciate why the cheat codes are useful, it helps to have a high-level overview of the Axiom system. Following the flow of the Airdrop example:

1. A query is sent to the AxiomV2Query contract's sendQuery function. In the Airdrop example the user sends it from the UI.
   - The query follows the Axiom query format spec.
   - It uses the compute proof from an Axiom client circuit.
   - The query arguments can be created in a number of ways using the SDKs, e.g. CLI, NodeJS, React.
   - The AxiomV2Callback is specified here. This is what runs after query fulfillment.
2. Query verification
   - Offchain, Axiom indexes the query.
   - It computes the result and generates a ZK proof of validity.
3. Axiom calls fulfillQuery on the AxiomV2Query contract.
   - Onchain: the ZK proof is verified, hashes are checked, and the query is confirmed to match the original.
   - The callback specified by the AxiomV2Callback in step 1 is called.
4. Callback runs
   - This allows a custom contract to make use of the query results and run custom logic.
   - In the Airdrop example, the AutonomousAirdrop.sol contract validates the relevant airdrop requirements and issues the token if they are met.

When testing locally, the query fulfillment in step 3 is not possible, which would block testing of the custom logic implemented in the callback in step 4. That’s where the AxiomTest library comes in.
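To make the dependency between the steps concrete, here is a toy in-memory model of the lifecycle. It is only a sketch: ToyAxiomQuery, its methods and the proofIsValid flag are hypothetical stand-ins I made up for illustration, not Axiom's contracts or API, and no proof is actually verified.

```typescript
// Toy in-memory model of the query lifecycle. Every name here is hypothetical
// and none of it is Axiom's real API.
type ToyCallback = (results: string[]) => void;

class ToyAxiomQuery {
  // Pending queries keyed by id; each entry remembers the callback to run.
  private pending: Record<number, ToyCallback | undefined> = {};
  private nextId = 1;

  // Step 1: register the query together with its callback.
  sendQuery(callback: ToyCallback): number {
    const id = this.nextId++;
    this.pending[id] = callback;
    return id;
  }

  // Step 3: only a fulfilment carrying a valid proof triggers the callback (step 4).
  fulfillQuery(id: number, results: string[], proofIsValid: boolean): void {
    const callback = this.pending[id];
    if (callback === undefined) throw new Error("unknown query");
    if (!proofIsValid) throw new Error("invalid proof");
    delete this.pending[id];
    callback(results);
  }
}

// Usage: the callback logic stays unreachable until the query is fulfilled.
const toyQuery = new ToyAxiomQuery();
const received: string[][] = [];
const toyId = toyQuery.sendQuery((results) => { received.push(results); });
toyQuery.fulfillQuery(toyId, ["0x01"], true);
```

In the toy model nothing reaches the callback without a fulfilment, which mirrors the local testing problem: without Axiom's offchain prover there is nobody to perform step 3.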

Step By Step Testing

Following AutonomousAirdrop.t.sol shows step by step how to use AxiomTest and lets us investigate what is going on.

Importing

AxiomTest follows the same convention as a usual Foundry test, except that we import AxiomTest.sol and inherit from AxiomTest in the test contract:

    import { AxiomTest, AxiomVm } from "@axiom-crypto/v2-periphery/test/AxiomTest.sol";

    contract AutonomousAirdropTest is AxiomTest { ...

Setup

setUp() also works the same as in Foundry: it is an optional function invoked before each test case is run. Here there’s a bit more going on:

    function setUp() public {
        _createSelectForkAndSetupAxiom("sepolia", 5_103_100);

        inputPath = "app/axiom/data/inputs.json";
        querySchema = axiomVm.compile("app/axiom/swapEvent.circuit.ts", inputPath);

        autonomousAirdrop = new AutonomousAirdrop(axiomV2QueryAddress, uint64(block.chainid), querySchema);
        uselessToken = new UselessToken(address(autonomousAirdrop));
        autonomousAirdrop.updateAirdropToken(address(uselessToken));
    }

_createSelectForkAndSetupAxiom is found in the AxiomTest.sol contract. It initialises everything Axiom-related on a local fork so the tests can be run locally:

- Set up and select a new local fork using vm.createSelectFork(urlOrAlias, forkBlock) (see the Foundry docs).
- Using the provided chainId, find the addresses for axiomV2Core and axiomV2Query from the local AxiomV2Addresses. These are actual deployments and currently only exist on mainnet/sepolia.
- Initialise the core and query contracts using the addresses and interfaces:

      axiomV2Core = IAxiomV2Core(axiomV2CoreAddress);
      axiomV2Query = IAxiomV2Query(axiomV2QueryAddress);

- Initialise axiomVm:

      axiomVm = new AxiomVm(axiomV2QueryAddress, urlOrAlias, true);

AxiomVm.sol implements the cheatcode functionality as well as providing utility functions for compiling, proving, parsing args, etc.

After the fork is initialised, the axiomVm compile function compiles the local circuit and retrieves the querySchema associated with it. The querySchema is a unique identifier that lets a callback function distinguish the type of compute query used to generate the results passed to it. It is used as a constructor argument when creating a new AutonomousAirdrop contract.

Behind the scenes, compile uses Foundry FFI to run the Axiom CLI compile command:

npx axiom circuit compile _circuitPath --provider vm.rpcUrl(urlOrAlias) --inputs inputPath --outputs COMPILED_PATH --function circuit --mock

This outputs a JSON file which contains the querySchema. This value is parsed from the file and returned.
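The parsing step can be sketched in TypeScript as follows. This is an illustration under assumptions, not the library's code: AxiomVm does this in Solidity, and I am assuming that querySchema is a top-level string field in the compiled JSON holding a 0x-prefixed bytes32 value. The function name parseQuerySchema and the example value are made up.

```typescript
// Pull the querySchema out of the compile output.
// Assumption: it is a top-level string field holding a 0x-prefixed bytes32 value.
function parseQuerySchema(compiledJson: string): string {
  const parsed: unknown = JSON.parse(compiledJson);
  if (typeof parsed !== "object" || parsed === null) {
    throw new Error("compiled output is not a JSON object");
  }
  const schema = (parsed as { querySchema?: unknown }).querySchema;
  if (typeof schema !== "string" || !/^0x[0-9a-fA-F]{64}$/.test(schema)) {
    throw new Error("querySchema missing or not a bytes32 hex string");
  }
  return schema;
}

// Made-up example value (32 bytes of 0xab), not a real schema.
const exampleSchema = "0x" + new Array(33).join("ab");
const querySchemaValue = parseQuerySchema(JSON.stringify({ querySchema: exampleSchema }));
```

Failing loudly on a missing or malformed field is a deliberate choice in this sketch: a wrong querySchema would otherwise only surface much later, when the callback rejects the query.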

Testing SendQuery

The test test_axiomSendQuery covers step 1 in the System Overview above.

    function test_axiomSendQuery() public {
        AxiomVm.AxiomSendQueryArgs memory args =
            axiomVm.sendQueryArgs(inputPath, address(autonomousAirdrop), callbackExtraData, feeData);

        axiomV2Query.sendQuery{ value: args.value }(
            args.sourceChainId,
            args.dataQueryHash,
            args.computeQuery,
            args.callback,
            args.feeData,
            args.userSalt,
            args.refundee,
            args.dataQuery
        );
    }

Looking at AxiomVm's sendQueryArgs, we see it again uses the Axiom CLI, this time via the functions _prove and _queryParams.

_prove runs the prove command:

npx axiom circuit prove circuitPath --mock --sourceChainId vm.toString(block.chainid) --compiled COMPILED_PATH --provider vm.rpcUrl(urlOrAlias) --inputs inputPath --outputs OUTPUT_PATH --function circuit

This proves the previously compiled circuit and generates a JSON output file with the interface:

    {
        sourceChainId: string,
        computeResults: string[], // bytes32[]
        computeQuery: AxiomV2ComputeQuery,
        dataQuery: DataSubquery[],
    }
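If you want to inspect this file from a script, a small runtime check against the interface above might look like the sketch below. The names ProveOutput and isProveOutput are mine, and AxiomV2ComputeQuery and DataSubquery are deliberately treated as opaque values here because their fields are not covered in this post.

```typescript
// Shape of the prove output as described above. The nested Axiom types are
// left opaque on purpose; this sketch does not try to model them.
interface ProveOutput {
  sourceChainId: string;
  computeResults: string[]; // bytes32[] encoded as hex strings
  computeQuery: unknown;    // AxiomV2ComputeQuery
  dataQuery: unknown[];     // DataSubquery[]
}

// Runtime type guard: checks only the top-level fields and their basic types.
function isProveOutput(value: unknown): value is ProveOutput {
  if (typeof value !== "object" || value === null) return false;
  const v = value as {
    sourceChainId?: unknown;
    computeResults?: unknown;
    computeQuery?: unknown;
    dataQuery?: unknown;
  };
  return (
    typeof v.sourceChainId === "string" &&
    Array.isArray(v.computeResults) &&
    v.computeResults.every((r) => typeof r === "string") &&
    v.computeQuery !== undefined &&
    Array.isArray(v.dataQuery)
  );
}

// Usage with made-up placeholder data.
const sampleOutput: unknown = JSON.parse(
  '{"sourceChainId":"11155111","computeResults":["0x01"],"computeQuery":{},"dataQuery":[]}'
);
const sampleIsValid = isProveOutput(sampleOutput);
```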

_queryParams then runs the query-params command:

npx axiom circuit query-params vm.toString(callbackTarget) --sourceChainId vm.toString(block.chainid) --refundAddress vm.toString(msg.sender) --callbackExtraData vm.toString(callbackExtraData) --maxFeePerGas vm.toString(feeData.maxFeePerGas) --callbackGasLimit vm.toString(feeData.callbackGasLimit) --provider vm.rpcUrl(urlOrAlias) --proven OUTPUT_PATH --outputs QUERY_PATH --args-map

This uses the output generated by the prove step (at OUTPUT_PATH) and writes the sendQuery arguments to a JSON file in the format:

    {
        value: bigint,
        queryId: bigint,
        calldata: string,
        args,
    }

This file is read and the args are returned as a string, which is parsed in _parseSendQueryArgs and returned as an AxiomSendQueryArgs struct.

Finally, sendQuery itself is called on the axiomV2Query contract initialised during setup, using the parsed args.
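Foundry's vm.ffi takes the command as an array of strings, so the query-params invocation shown earlier in this section is really a list of flag and value pairs. The sketch below rebuilds that list in TypeScript to make the mapping explicit. The flags are copied from the command above; the QueryParamsOptions type, the function name and the example values are hypothetical, and the real assembly happens in Solidity inside AxiomVm.

```typescript
// Values that AxiomVm converts with vm.toString(...) before calling the CLI.
// The field names are my own; the flags below come from the command in this post.
interface QueryParamsOptions {
  callbackTarget: string;
  sourceChainId: string;
  refundAddress: string;
  callbackExtraData: string;
  maxFeePerGas: string;
  callbackGasLimit: string;
  provider: string;
  provenPath: string;  // OUTPUT_PATH written by the prove step
  outputsPath: string; // QUERY_PATH to write the sendQuery args to
}

// Build the argument vector for the query-params command.
function buildQueryParamsArgv(o: QueryParamsOptions): string[] {
  return [
    "npx", "axiom", "circuit", "query-params", o.callbackTarget,
    "--sourceChainId", o.sourceChainId,
    "--refundAddress", o.refundAddress,
    "--callbackExtraData", o.callbackExtraData,
    "--maxFeePerGas", o.maxFeePerGas,
    "--callbackGasLimit", o.callbackGasLimit,
    "--provider", o.provider,
    "--proven", o.provenPath,
    "--outputs", o.outputsPath,
    "--args-map",
  ];
}

// Usage with placeholder values only.
const exampleArgv = buildQueryParamsArgv({
  callbackTarget: "0x" + new Array(40).join("0") + "1",
  sourceChainId: "11155111",
  refundAddress: "0x" + new Array(40).join("0") + "2",
  callbackExtraData: "0x",
  maxFeePerGas: "1",
  callbackGasLimit: "1",
  provider: "http://localhost:8545",
  provenPath: "proven.json",
  outputsPath: "query.json",
});
```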

Testing Callback

The test test_axiomCallback mocks step 3 in the System Overview and allows the callback to be tested.

    function test_axiomCallback() public {
        AxiomVm.AxiomFulfillCallbackArgs memory args =
            axiomVm.fulfillCallbackArgs(inputPath, address(autonomousAirdrop), callbackExtraData, feeData, SWAP_SENDER_ADDR);

        axiomVm.prankCallback(args);
    }

As in the previous test, fulfillCallbackArgs uses the Axiom CLI (prove and query-params) to generate the required args for AxiomFulfillCallbackArgs. prankCallback then uses them to call the axiomV2Callback function on the AutonomousAirdrop contract (args.callbackTarget is its address) with the relevant spoofed Axiom results:

    IAxiomV2Client(args.callbackTarget).axiomV2Callback{gas: args.gasLimit}(
        args.sourceChainId,
        args.caller,
        args.querySchema,
        args.queryId,
        args.axiomResults,
        args.callbackExtraData
    );

The axiomV2Callback function is inherited from AxiomV2Client, and it in turn calls _validateAxiomV2Call and _axiomV2Callback.
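To see why the call has to be pranked, here is a toy TypeScript model of the checks a client might run before its callback logic. It rests on assumptions I have not walked through in this post: that the client only accepts calls from the AxiomV2Query address, and that validation compares the source chain ID and querySchema against the values passed to the constructor. All names below are hypothetical.

```typescript
// Toy model of a callback guard. The three checks are assumptions about what
// the real client verifies, based on the constructor arguments seen in setUp().
interface ToyClientConfig {
  axiomV2QueryAddress: string;
  sourceChainId: number;
  querySchema: string;
}

function toyAxiomV2Callback(
  cfg: ToyClientConfig,
  msgSender: string,
  sourceChainId: number,
  querySchema: string,
  runCallback: () => void
): void {
  // Only the query contract may deliver results (assumed check).
  if (msgSender.toLowerCase() !== cfg.axiomV2QueryAddress.toLowerCase()) {
    throw new Error("caller must be AxiomV2Query");
  }
  // Stand-in for _validateAxiomV2Call (assumed checks).
  if (sourceChainId !== cfg.sourceChainId) throw new Error("source chain ID does not match");
  if (querySchema !== cfg.querySchema) throw new Error("invalid query schema");
  // Stand-in for _axiomV2Callback: the custom logic under test.
  runCallback();
}

// Usage with placeholder values; 11155111 is Sepolia's chain ID.
const toyConfig: ToyClientConfig = {
  axiomV2QueryAddress: "0x" + new Array(40).join("0") + "1",
  sourceChainId: 11155111,
  querySchema: "0x" + new Array(33).join("ab"),
};
let toyCallbackRuns = 0;
toyAxiomV2Callback(
  toyConfig,
  toyConfig.axiomV2QueryAddress, // "pranking" as the query contract
  11155111,
  toyConfig.querySchema,
  () => { toyCallbackRuns += 1; }
);
```

Under these assumptions, a test that called the callback from any other address would fail before reaching the airdrop logic, which is the gap a prank-style cheat code fills.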

Conclusion

Following through these tests and libraries really helps in understanding the moving parts of the Axiom system, and hopefully this post helps others too. It’s exciting to see what gets built with Axiom as it becomes another core primitive!

Photo by David Travis on Unsplash